Building a Template-First Research Agent

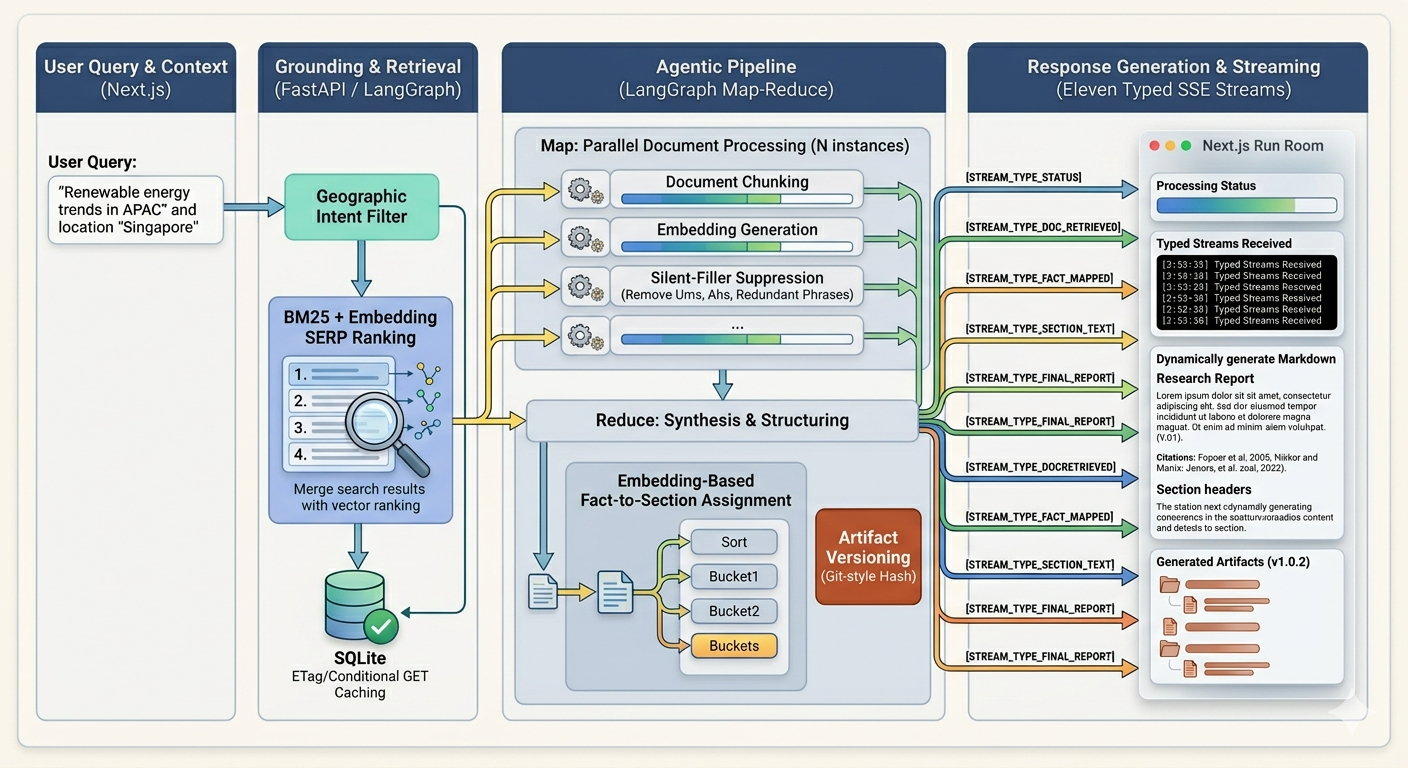

LangGraph grounded web pipeline: retrieval, grounding, geographic ranking, SSE events, SQLite cache.

Raghav

Author

TL;DR

Notes on grounding, map-reduce, and the small lies LLMs tell when you don't watch them

I've spent the last few days building research-agent: a playground where you pick a report template (market brief or investment memo), type a question into a box, and watch a LangGraph pipeline plan sub-questions, scrape the open web, extract facts, and synthesize a cited report in front of you. Live SSE updates, a React Flow topology view, the works.

The interesting parts of this project were not the parts I expected. The plumbing — FastAPI, LangGraph, Next.js — went together quickly. The hard problems were the ones that only surface once a real pipeline is shoveling real web content into a real LLM: planner shortcuts that quietly skip half your template, retrieval that confidently brings back the wrong country, synthesis that writes beautiful paragraphs grounded in nothing.

This post is a walk through the architecture, with the design decisions framed honestly. Some of them are good. Some are workarounds. I'll mark which is which.

Why template-first

Most "research agent" demos I've seen are open-ended: ask a question, get a wall of prose, hope it answers what you wanted. That works for casual users. It is not how an analyst works.

An analyst opens a template. The template tells them which questions to answer — executive_summary, key_findings, market_landscape, risks. The structure constrains the work. It also makes the work auditable: did we cover risks? Yes / no / not enough evidence.

So research-agent is template-first. A TemplateRegistry defines ResearchTemplate objects, each with a list of SectionSpecs. A section spec has an id, a title, a description, a required flag, and a min_evidence threshold. Two templates ship in the repo:

market_brief: executive_summary (req, min_ev=1), key_findings (req, min_ev=1), market_landscape (opt, min_ev=1), data_notes (opt, min_ev=1)

investment_memo: thesis (req), bull_base_bear (req), competitors (opt), funding_landscape (opt), risks (req, min_ev=0)

This single registry is the spine of the system. The planner reads it. Synthesis reads it. Coverage validation reads it. The frontend reads it. Whenever the system needs to know "what does done look like?", the answer comes from the template.
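To make the shape concrete, here is a minimal sketch of what such a registry could look like. The class and field names (SectionSpec, ResearchTemplate, TemplateRegistry, id, title, description, required, min_evidence) come from the description above; the method names, titles, and description strings are placeholders I made up for illustration, not the repo's actual code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SectionSpec:
    id: str
    title: str
    description: str
    required: bool = True
    min_evidence: int = 1


@dataclass(frozen=True)
class ResearchTemplate:
    id: str
    sections: tuple  # tuple of SectionSpec, in report order

    def required_ids(self) -> list:
        """Section ids that must be covered for the report to count as done."""
        return [s.id for s in self.sections if s.required]


class TemplateRegistry:
    def __init__(self) -> None:
        self._templates = {}

    def register(self, template: ResearchTemplate) -> None:
        self._templates[template.id] = template

    def get(self, template_id: str) -> ResearchTemplate:
        return self._templates[template_id]


# Titles and descriptions below are placeholders, not the shipped strings.
registry = TemplateRegistry()
registry.register(ResearchTemplate(
    id="market_brief",
    sections=(
        SectionSpec("executive_summary", "Executive summary", "placeholder", True, 1),
        SectionSpec("key_findings", "Key findings", "placeholder", True, 1),
        SectionSpec("market_landscape", "Market landscape", "placeholder", False, 1),
        SectionSpec("data_notes", "Data notes", "placeholder", False, 1),
    ),
))
```

Because the planner, synthesis, validation, and frontend would all go through the same `get` call, a change to a section's `min_evidence` shows up everywhere at once.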

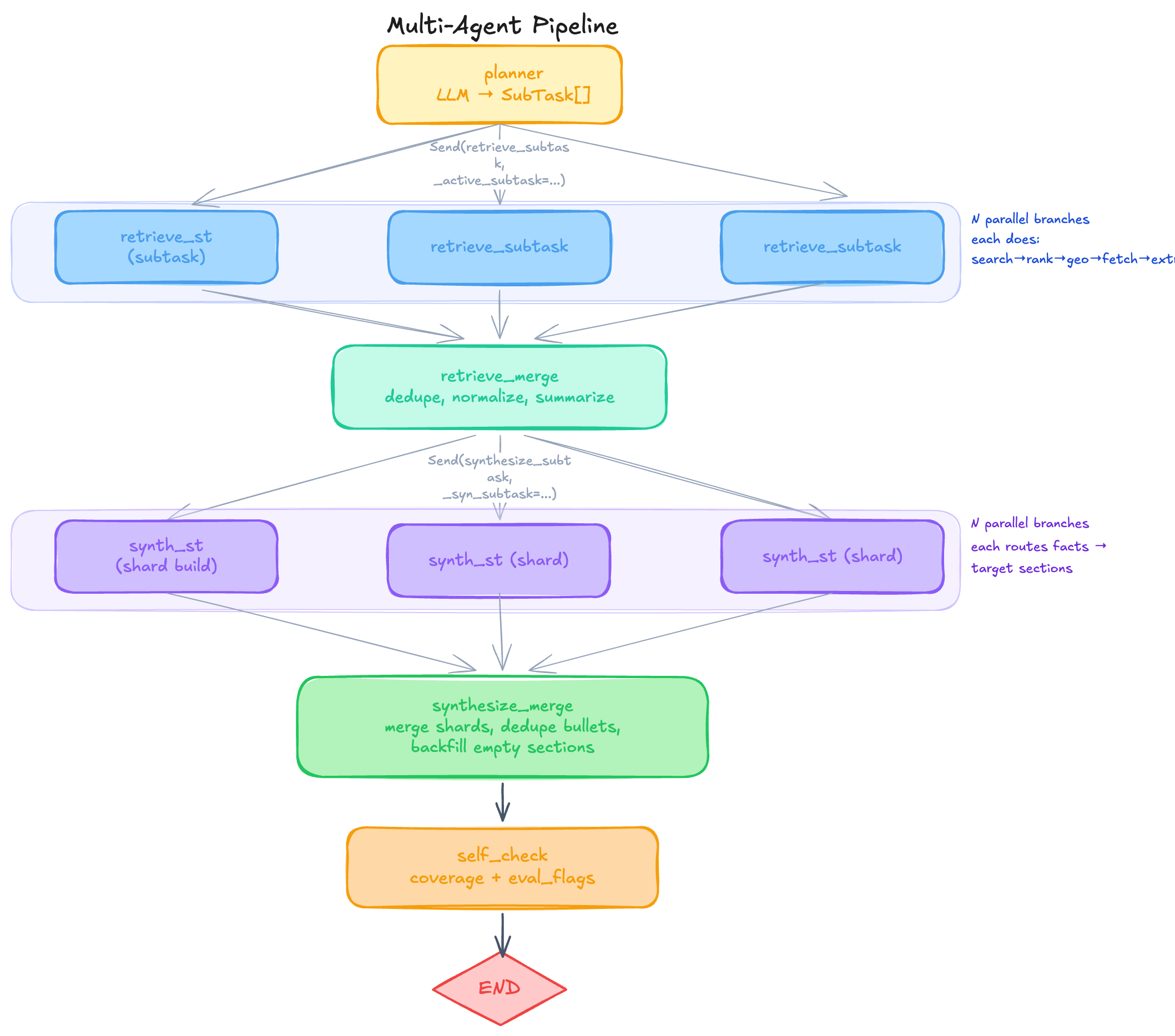

The pipeline, in one diagram

The orchestration lives in backend/app/graph/pipeline.py as a LangGraph state machine. It's a planner → fan-out → merge → fan-out → merge → self-check pattern.

The Send calls are LangGraph's fan-out primitive — they spawn an N-way map step where each branch sees a slice of state via an ephemeral key (_active_subtask, _syn_subtask). The merge nodes consume the parallel results through reducer lists that use operator.add to accumulate across branches.
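The reducer idea is easier to see without the framework in the way. The sketch below is plain asyncio, not LangGraph's API: it only imitates the behaviour described above, where each branch returns a partial update and list-valued keys are concatenated with operator.add. The function names and the shape of the sub-task dicts are hypothetical.

```python
import asyncio
import operator
from functools import reduce


async def retrieve_branch(subtask: dict) -> dict:
    """One map branch: sees only its own sub-task, returns a partial update."""
    await asyncio.sleep(0)  # stand-in for search + fetch IO
    return {"evidences": [f"fact for {subtask['id']}"]}


async def fan_out_and_merge(subtasks: list) -> dict:
    # Map: one coroutine per sub-task, all running concurrently.
    partials = await asyncio.gather(*(retrieve_branch(s) for s in subtasks))
    # Reduce: concatenate the per-branch lists, as an additive reducer would.
    merged = reduce(operator.add, (p["evidences"] for p in partials), [])
    return {"evidences": merged}


state = asyncio.run(fan_out_and_merge([{"id": "s1"}, {"id": "s2"}, {"id": "s3"}]))
```

The point of the additive reducer is that no branch ever needs to see or overwrite another branch's output; the merge step just receives the concatenation.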

Two map-reduce stages, in series. That structure is doing a lot of work, so it's worth saying why.

Why map-reduce, twice

The naive shape of a research agent is sequential: pick the next sub-question, search, fetch, extract, write a paragraph, repeat. That's fine until you have eight sub-questions and each one wants to fetch four pages. Now you're waiting forty seconds for IO that could have happened in eight.

Map-reduce in retrieve_* is mostly about latency. The eight sub-tasks fan out, each runs DDGS searches in a thread pool, fetches pages via async httpx, and emits Evidence rows tagged with origin_subtask_id. They merge back into a single evidences list via an additive reducer.

Map-reduce in synthesize_* is about something subtler. After retrieval, you have a big pile of evidences. Naively you could hand all of them to one synthesis call: "here are 80 facts, please write the sections." That doesn't scale, and worse, it loses the origin signal — the fact that this evidence was retrieved for this sub-question is information you want to preserve when routing facts to template sections.

So synthesis fans out per sub-task. Each branch calls build_subtask_shard, which keyword-routes its own evidences into the target_sections the planner assigned. Orphan facts (no section matched) get distributed round-robin so they don't get dropped. Each branch produces a small shard: {section_id: [bullet, bullet, ...]}. synthesize_merge then collapses shards across branches, dedupes bullets, and hands the result off.

The parallelism is real, but the bigger win is locality. Each branch reasons about a coherent slice of evidence.
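Here is a rough sketch of the shard-and-merge idea, under some assumptions: evidences are plain dicts with a "fact" string, each section has a hand-written keyword list, and a fact that matches several sections is routed to all of them. The function names and the keyword table are illustrative; the real build_subtask_shard and synthesize_merge may differ on every one of those points.

```python
from itertools import cycle


def build_shard(evidences, target_sections, section_keywords):
    """Keyword-route one branch's evidences into its target sections."""
    if not target_sections:
        return {}
    shard = {sid: [] for sid in target_sections}
    orphans = []
    for ev in evidences:
        text = ev["fact"].lower()
        matched = [sid for sid in target_sections
                   if any(kw in text for kw in section_keywords.get(sid, []))]
        if matched:
            for sid in matched:
                shard[sid].append(ev["fact"])
        else:
            orphans.append(ev["fact"])
    # Orphans are spread round-robin so that no fact is silently dropped.
    for sid, fact in zip(cycle(target_sections), orphans):
        shard[sid].append(fact)
    return shard


def merge_shards(shards):
    """Collapse shards across branches, dropping exact duplicate bullets."""
    merged = {}
    for shard in shards:
        for sid, bullets in shard.items():
            bucket = merged.setdefault(sid, [])
            for bullet in bullets:
                if bullet not in bucket:
                    bucket.append(bullet)
    return merged


keywords = {"risks": ["risk"], "competitors": ["competitor"]}  # hypothetical
evidences = [
    {"fact": "Regulatory risk is rising"},
    {"fact": "Power costs fell"},
    {"fact": "Grid upgrades planned"},
]
shard = build_shard(evidences, ["risks", "competitors"], keywords)
merged = merge_shards([shard, {"risks": ["Power costs fell"]}])
```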

The "NOT FOUND" problem

The first time I ran the full pipeline end-to-end on a real query — "data center buildout in Nepal" — I got a beautiful market_landscape section and the words NOT FOUND in every other section. executive_summary: NOT FOUND. key_findings: NOT FOUND.

The planner had returned three sub-tasks. All three had target_sections = ["market_landscape"]. The LLM, given the freedom to pick targets, had picked the one section that sounded most "research-y" and ignored the rest. Synthesis dutifully routed all evidence into market_landscape. The other sections, having no facts, were flagged not_found and short-circuited.

The fix is _ensure_core_sections_baked in planner.py. After the LLM returns its plan, we audit the union of target_sections across all sub-tasks. If a required core section (executive_summary, key_findings, thesis) is missing, we inject it into the first sub-task's targets. The planner can still propose a thoughtful distribution; we just guarantee that the load-bearing sections always have at least one routing path.

It's a guardrail, not a substitute for a smarter planner prompt. But it's the kind of guardrail you only learn you need by running the thing.
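The audit itself is only a few lines. This is a sketch of the idea rather than the code in planner.py: I'm assuming sub-tasks are dicts with a target_sections list and that the caller passes in the template's required section ids. It returns a patched copy instead of mutating the plan, which is my choice for the example.

```python
import copy

CORE_SECTIONS = ("executive_summary", "key_findings", "thesis")


def ensure_core_sections(subtasks, required_section_ids):
    """Guarantee every required core section has at least one routing path."""
    patched = copy.deepcopy(subtasks)
    if not patched:
        return patched
    # Union of everything the planner chose to target.
    covered = {sid for st in patched for sid in st["target_sections"]}
    for sid in CORE_SECTIONS:
        if sid in required_section_ids and sid not in covered:
            # Inject the missing core section into the first sub-task.
            patched[0]["target_sections"].append(sid)
    return patched


# The failing plan from the Nepal run: three sub-tasks, one target each.
plan = [
    {"id": "s1", "target_sections": ["market_landscape"]},
    {"id": "s2", "target_sections": ["market_landscape"]},
    {"id": "s3", "target_sections": ["market_landscape"]},
]
fixed = ensure_core_sections(plan, ["executive_summary", "key_findings"])
```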

There's a second half to this fix in synthesis. assign_evidences_for_template runs as a backfill: after shard merge, if any section is still empty and the template says it shouldn't be, we look at all evidences and try to fill the gap. Two modes:

When SYNTHESIS_EMBEDDING_ASSIGN=1 and an API key is available, we embed both facts and section descriptions and score each fact against each empty section:

score = 0.74 * cos(fact_embedding, section_embedding) \
      + 0.26 * cos(fact_embedding, query_embedding)

Otherwise we fall back to a keyword heuristic over the section title and description.

Embeddings vs keywords: a real tradeoff

I want to be honest that the embedding path is not obviously better.

The keyword path is fast, free, and explainable. If you ask why a fact about "venture funding" landed in funding_landscape, the answer is: the word "funding" matched. That's a trace you can show to a user.

The embedding path costs an OpenRouter call per run, is non-deterministic across model versions, and produces assignments that are harder to defend. It also handles the cases keywords miss — a fact about "Series B rounds raised by Southeast Asian colocation operators" embeds close to funding_landscape without sharing any obvious keyword.

The weighting 0.74 / 0.26 is a hand-tuned mix of "how well does this fact match the section?" with a smaller term for "how well does this fact match the original query?". The query term acts as a relevance prior — it discourages off-topic facts from being shoved into a half-empty section just to fill space.
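As a worked example of the blend, here is a small pure-Python version of the score. The 0.74 and 0.26 weights are the ones quoted above; the function names and the toy two-dimensional vectors are mine, standing in for real embeddings.

```python
import math


def cosine(a, b):
    """Cosine similarity of two equal-length vectors; 0.0 if either is zero."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def assignment_score(fact_vec, section_vec, query_vec,
                     w_section=0.74, w_query=0.26):
    """Blend section fit with a smaller query-relevance prior."""
    return (w_section * cosine(fact_vec, section_vec)
            + w_query * cosine(fact_vec, query_vec))


section_vec = [1.0, 0.0]
query_vec = [0.0, 1.0]
# A fact that matches the section perfectly but ignores the query.
section_only = assignment_score([1.0, 0.0], section_vec, query_vec)
# A fact that leans toward both the section and the query.
balanced = assignment_score([1.0, 1.0], section_vec, query_vec)
```

In this toy case the section-only fact scores 0.74 and the balanced fact scores about 0.71, so the query term narrows the gap without overturning a strong section match.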

Right now, embedding-assign is opt-in via env flag. I think that's the right default. Heuristics first, embeddings as an upgrade path.

Geographic divergence: the Malaysia problem

Here is a failure mode that took me a while to even see. Query: "Nepal data center investment". The retrieval ranker happily surfaced a long, well-written article about a Malaysian hyperscale buildout. BM25-lite loved it: "data center", "investment", "hyperscale" — all present. Domain trust was high. Recency was good. The article got rank 2 and was fetched.

The article had nothing to do with Nepal.

This is a class of bug that pure lexical ranking will never solve: the words match, the relevance is high, but the referent is wrong. Embeddings help a little, but not reliably; an article densely about "hyperscale data centers" embeds close to a query about "hyperscale data centers" regardless of geography.

The fix lives in retrieve_intent.py as geographic_divergence_penalty. It is dumb and effective:

A _GEO_LEXICON enumerates countries, major cities, regions.

Tokenize both the query and the candidate hit's title+snippet with word boundaries.

If the query mentions a geo and the hit mentions a different geo, subtract a scaled penalty from the rank score.

There's no penalty if the query has no geo. There's no penalty if the hit has no geo. The penalty only fires on divergence. It is controlled by RETRIEVAL_GEO_PENALTY_WEIGHT so I can dial it down if it gets too aggressive.
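A stripped-down version of those rules, to show how little code it takes. This is a sketch, not the contents of retrieve_intent.py: the four-entry lexicon is a toy, and mapping each surface term to a country code (so that "Kathmandu" does not diverge from "Nepal") is my assumption about how a lexicon like this would avoid penalizing a city inside the queried country.

```python
import re

# Toy lexicon: surface term -> country code. The real list is far longer.
GEO_LEXICON = {
    "nepal": "NP",
    "kathmandu": "NP",
    "malaysia": "MY",
    "kuala lumpur": "MY",
}


def geos_in(text):
    """Country codes mentioned in the text, matched on word boundaries."""
    lowered = text.lower()
    return {code for term, code in GEO_LEXICON.items()
            if re.search(rf"\b{re.escape(term)}\b", lowered)}


def geographic_divergence_penalty(query, hit_text, weight=1.0):
    """Penalty to subtract from the rank score; fires only on divergence."""
    query_geos = geos_in(query)
    hit_geos = geos_in(hit_text)
    if not query_geos or not hit_geos:
        return 0.0  # nothing to compare on one side
    if query_geos & hit_geos:
        return 0.0  # the hit mentions the place the query asked about
    return weight


query = "Nepal data center investment"
malaysia_hit = "Malaysia hyperscale data center buildout attracts investment"
nepal_hit = "Kathmandu colocation operators expand data center capacity"
```

Here `weight` plays the role of RETRIEVAL_GEO_PENALTY_WEIGHT: setting it to 0 switches the rule off without touching the ranker.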

The lesson generalizes: a lexicon-and-rules layer on top of a probabilistic ranker is often the cleanest way to encode "I know something the ranker doesn't." Don't reach for embeddings or fine-tuning when a 200-line word list will do.

Retrieval, the rest of it

The full retrieval stack, in order:

search_web_hits: DDGS calls in a thread pool, configurable per-subtask query count.

Rank: BM25-lite over title+snippet, thin-snippet penalty, domain trust boost/downgrade, recency bonus from ddgs_datetime, title-overlap term.

Geographic penalty: as above.

Optional embedding rerank: RERANK_USE_EMBEDDINGS=1 runs a second pass.

Canonical dedupe: dedupe_hits_canonical collapses URLs by url_normalize-derived key.

fetch_page: httpx GET with cache (more below), size cap, PDF branch via pypdf or HTML branch via BeautifulSoup with main/article extraction and a paywall heuristic.

naive_extract_facts: produce Evidence rows with origin_subtask_id and a SourceRef.

Near-identical dedupe: dedupe_evidence_near_identical fingerprints by (quote, fact, URL host) and keeps the highest-scored duplicate.

The cross-branch coordination is interesting. Each retrieval branch is its own coroutine, but they share a RetrievalCoordinator: an asyncio.Lock guarding a global max_pages budget and a canonical-URL set, and an asyncio.Semaphore capping total IO concurrency. Without this, eight parallel branches would each independently fetch the same trending article, blow past the page budget, and waste both bandwidth and the user's patience.
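The coordinator pattern can be sketched in about twenty lines. The class name and the three primitives (a lock, a page budget, a semaphore) are from the description above; the method names claim and fetch, and the fake fetcher, are hypothetical.

```python
import asyncio


class RetrievalCoordinator:
    """Shared by all retrieval branches: page budget, URL set, IO cap."""

    def __init__(self, max_pages, max_concurrency):
        self._lock = asyncio.Lock()
        self._io = asyncio.Semaphore(max_concurrency)
        self._remaining = max_pages
        self._seen = set()

    async def claim(self, canonical_key):
        """Atomically reserve one page of budget for an unseen URL."""
        async with self._lock:
            if canonical_key in self._seen or self._remaining <= 0:
                return False
            self._seen.add(canonical_key)
            self._remaining -= 1
            return True

    async def fetch(self, canonical_key, fetcher):
        if not await self.claim(canonical_key):
            return None  # duplicate URL or budget exhausted
        async with self._io:  # cap total concurrent IO
            return await fetcher(canonical_key)


async def demo():
    # Built inside the running loop so the primitives bind to it.
    coordinator = RetrievalCoordinator(max_pages=2, max_concurrency=2)

    async def fake_fetch(key):
        await asyncio.sleep(0)  # stand-in for an httpx GET
        return f"page:{key}"

    keys = ["a", "a", "b", "c"]
    return await asyncio.gather(*(coordinator.fetch(k, fake_fetch) for k in keys))


results = asyncio.run(demo())
```

In the demo, the second request for "a" is refused as a duplicate and "c" is refused because the two-page budget is already spent.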

Fetch cache and conditional GET

fetch_cache.py is a SQLite table keyed by the canonical URL hash. Each row carries the raw bytes, extracted text, an extract_schema_version, and a TTL. When FETCH_CACHE_ENABLED=1:

On a cache hit within TTL: skip network, return cached extracted text.

On a near-expiry hit: send If-Modified-Since / If-None-Match headers. If the server returns 304, refresh the row's TTL and reuse cached text.

On extract_schema_version mismatch: invalidate. If I change how I parse HTML, old cache rows shouldn't poison new runs.
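The three cache rules reduce to one small decision function. In this sketch the schema version number, the five-minute "near-expiry" window, and the etag / last_modified columns are all assumptions of mine (the validators have to be stored somewhere for a conditional GET to work, but I'm guessing at where). Treating a fully expired row as a plain refetch is also my choice.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional

EXTRACT_SCHEMA_VERSION = 3  # hypothetical current parser version
REVALIDATE_WINDOW_S = 300   # hypothetical "near-expiry" window


class CacheAction(Enum):
    USE_CACHED = "use_cached"
    REVALIDATE = "revalidate"  # conditional GET, expect 304 or fresh body
    REFETCH = "refetch"


@dataclass
class CacheRow:
    extracted_text: str
    extract_schema_version: int
    expires_at: float
    etag: Optional[str] = None
    last_modified: Optional[str] = None


def decide(row, now):
    """Pick what to do for one URL given its cache row (or None)."""
    if row is None or row.extract_schema_version != EXTRACT_SCHEMA_VERSION:
        return CacheAction.REFETCH  # missing, or parsed by an old extractor
    if now >= row.expires_at:
        return CacheAction.REFETCH
    if row.expires_at - now <= REVALIDATE_WINDOW_S:
        return CacheAction.REVALIDATE
    return CacheAction.USE_CACHED


def conditional_headers(row):
    """Validators to send on a REVALIDATE request."""
    headers = {}
    if row.etag:
        headers["If-None-Match"] = row.etag
    if row.last_modified:
        headers["If-Modified-Since"] = row.last_modified
    return headers


now = 1000.0
fresh = CacheRow("text", 3, expires_at=now + 3600)
near_expiry = CacheRow("text", 3, expires_at=now + 60, etag='"abc"')
old_schema = CacheRow("text", 2, expires_at=now + 3600)
```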

Two things make this work in practice. First, url_normalize.py is aggressive: strip fragments, drop tracking params (utm_*, gclid, fbclid), sort query keys, lowercase scheme and host, SHA-256 the result. Without aggressive normalization, the cache hit rate collapses — every UTM-tagged tweet links to "a different page" as far as the cache is concerned.
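For reference, the normalization steps listed above fit in a few lines of standard-library Python. This is a sketch of the technique, not a copy of url_normalize.py; the function names are mine, and the real module may handle more cases (ports, trailing slashes, more tracking params).

```python
import hashlib
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

TRACKING_PARAMS = {"gclid", "fbclid"}


def canonical_url(url):
    """Strip fragment and tracking params, sort query keys, lowercase host."""
    parts = urlsplit(url)
    query = sorted(
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if not key.lower().startswith("utm_") and key.lower() not in TRACKING_PARAMS
    )
    return urlunsplit((
        parts.scheme.lower(),
        parts.netloc.lower(),
        parts.path,
        urlencode(query),
        "",  # drop the fragment
    ))


def canonical_key(url):
    """SHA-256 of the canonical URL, usable as the cache key."""
    return hashlib.sha256(canonical_url(url).encode("utf-8")).hexdigest()


tagged = "HTTPS://Example.com/a?utm_source=tw&b=2&a=1#frag"
clean = "https://example.com/a?a=1&b=2"
```

Both URLs above collapse to the same key, which is exactly the property the cache hit rate depends on.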

Second, the cache is a flat key-value table, not a content store. I deliberately did not build a content-addressed store with deduplication. A research agent isn't a crawler; the cache exists to make iteration on the pipeline cheap, not to be a long-term archive.

Synthesis, grounding, and block_silent_filler

The synthesis step is where LLMs are most tempted to lie.

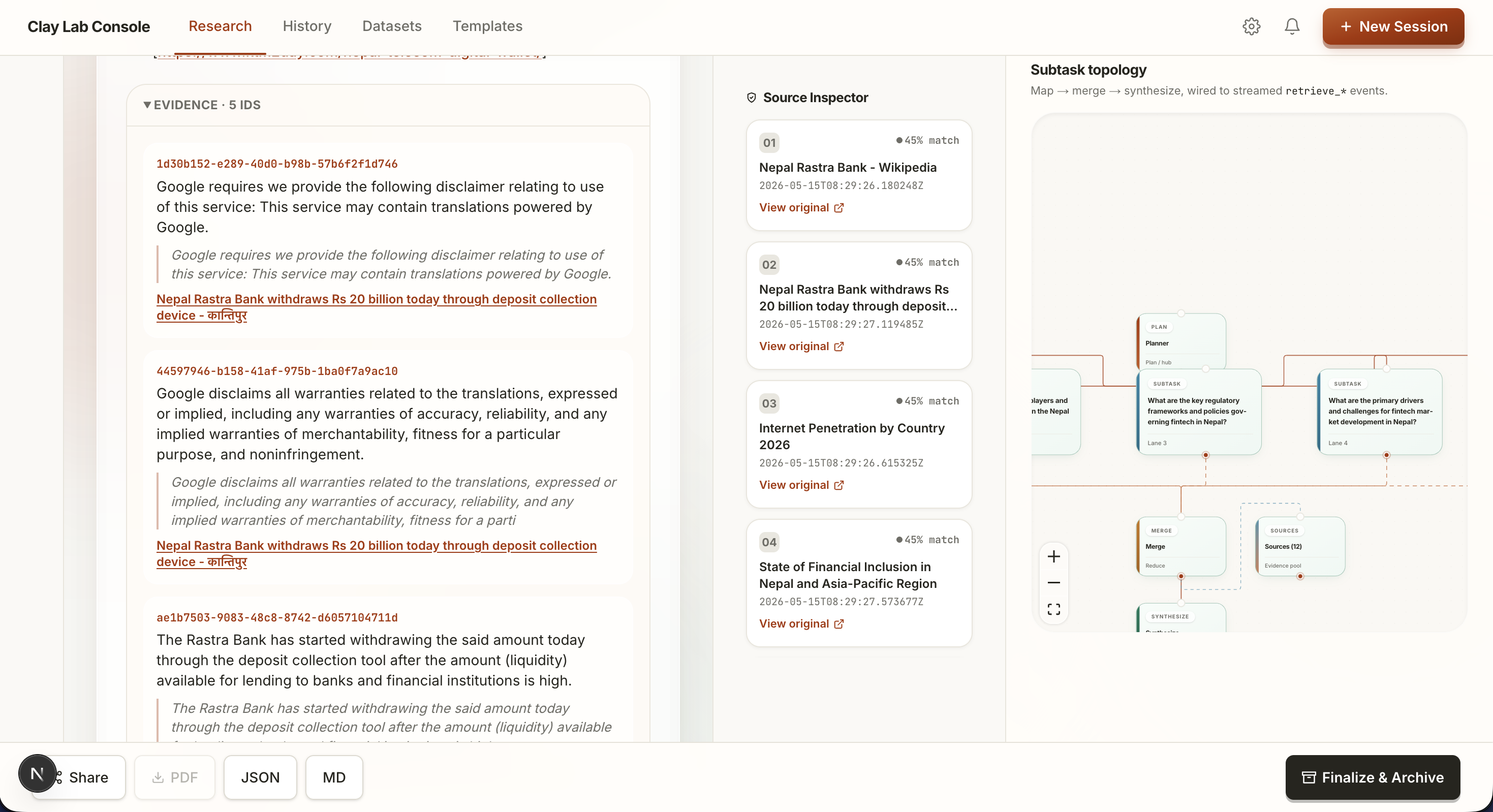

The pattern I settled on: synthesis writes bullet-style sentences, each annotated with an [evidence:UUID] anchor pointing to the evidence row it was derived from. The synthesis prompt is explicit that every claim must carry an anchor and that no new facts should be introduced.

optionally_llm_refine_sections is a second, optional LLM pass that rewrites the bullets into prose. It is heavily gated: it must preserve every [evidence:UUID] token, and a post-check rejects any rewrite that strips anchors or introduces sentences without anchors. If the rewrite fails validation, we keep the original bullets.
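The post-check is simple enough to sketch. Assumptions here: anchors look like [evidence:<36-character UUID>], each anchor sits before the sentence's closing punctuation, and a rewrite that invents an anchor the bullets never had is rejected too. The function name and regexes are illustrative, not the repo's.

```python
import re

ANCHOR_RE = re.compile(r"\[evidence:([0-9a-fA-F-]{36})\]")
SENTENCE_SPLIT_RE = re.compile(r"(?<=[.!?])\s+")


def refine_is_valid(original, rewritten):
    """Accept the rewrite only if anchors survive and every sentence has one."""
    required = set(ANCHOR_RE.findall(original))
    found = set(ANCHOR_RE.findall(rewritten))
    if found != required:
        return False  # an anchor was stripped, or a new one was invented
    sentences = [s for s in SENTENCE_SPLIT_RE.split(rewritten.strip()) if s]
    return all(ANCHOR_RE.search(s) for s in sentences)


U1 = "11111111-1111-1111-1111-111111111111"
U2 = "22222222-2222-2222-2222-222222222222"
bullets = f"Capacity doubled [evidence:{U1}]. Tariffs fell [evidence:{U2}]."
good = (f"Capacity doubled over the period [evidence:{U1}]. "
        f"Meanwhile tariffs fell [evidence:{U2}].")
stripped = f"Capacity doubled [evidence:{U1}]. Tariffs fell."
padded = good + " The outlook is bright."
```

A caller would keep `bullets` whenever `refine_is_valid` returns False, which matches the fallback described above.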

Then there's block_silent_filler. This one runs in validation.py after synthesis and is the single most important grounding guard. The logic: for each section, count the evidences actually wired in. If the section's body contains substantially more content than the evidences support — measured roughly by characters-per-evidence — flag the surplus as blocked_reason="silent_filler" and suppress it from the final output.

The motivation is the most common LLM failure mode in this space: given two sparse facts, the model writes a confident two-paragraph narrative around them, padding the gaps with plausible-sounding generalities. The user sees flowing prose and trusts it. The right behavior is to ship the two facts and a thin_evidence ribbon, not the prose.
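A sketch of the characters-per-evidence idea follows. The 400-character allowance is a number I picked for illustration, and trimming at sentence boundaries is my guess at what "suppress the surplus" means; the real check in validation.py may measure and cut differently.

```python
import re

MAX_CHARS_PER_EVIDENCE = 400  # hypothetical allowance per wired evidence


def block_silent_filler(body, evidence_count,
                        max_chars_per_evidence=MAX_CHARS_PER_EVIDENCE):
    """Keep only as much prose as the wired evidences can plausibly support."""
    budget = evidence_count * max_chars_per_evidence
    if len(body) <= budget:
        return {"body": body, "blocked_reason": None}
    kept, used = [], 0
    for sentence in re.split(r"(?<=[.!?])\s+", body.strip()):
        if used + len(sentence) > budget:
            break  # everything from here on is surplus
        kept.append(sentence)
        used += len(sentence) + 1  # +1 for the joining space
    return {"body": " ".join(kept), "blocked_reason": "silent_filler"}


padded_body = "Fact one. " + "Padding sentence. " * 50
trimmed = block_silent_filler(padded_body, evidence_count=1, max_chars_per_evidence=40)
untouched = block_silent_filler("Fact one.", evidence_count=1)
```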

The uncertainty taxonomy mirrors this:

supported: has enough evidence per min_evidence.

thin_evidence: has some, below threshold.

not_found: none.

stale: present but old enough that recency penalties dominated.
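As a sketch, the taxonomy is a four-way classifier. The function name is mine, staleness is passed in as a boolean because the description above doesn't say how it is computed, and the order of the checks (not_found first, then stale) is an assumption.

```python
def classify_section(evidence_count, min_evidence, is_stale=False):
    """Map a section's evidence count to one of the four ribbon states."""
    if evidence_count == 0:
        return "not_found"
    if is_stale:
        return "stale"
    if evidence_count < min_evidence:
        return "thin_evidence"
    return "supported"
```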

The frontend renders this taxonomy as a ribbon on each ReportCanvas ClayCard. Nobody pretends they got an answer they didn't.

SSE streaming and the client-side reducer

Streaming is where the system stops feeling like a script and starts feeling like a product.

POST /v1/research/stream opens a Server-Sent Events stream and emits eleven event types in order (the progress events repeat once per update):

run_started → planner_complete →

retrieve_map_started → retrieve_map_progress (×N) →

retrieve_merge_complete → retrieve_complete →

synthesize_map_progress (×N) → synthesize_merge_complete →

self_check_complete → synthesize_complete →

complete (full report + metrics + pipeline_activity)

Each *_progress event carries a per-subtask delta: status, queries used, pages fetched, evidences emitted.

On the client, ResearchRunContext.tsx holds central state and parses the SSE stream. Naively, each progress event would dispatch a full state replacement. That works but causes React to re-render the entire run room twelve times a second when retrieval is hot, and worse, can drop intermediate state if events arrive out of order.

The fix is a local _merge_stream_delta reducer on the client. It owns the rules for how a partial delta merges into the in-memory pipeline activity: subtask statuses upgrade monotonically (pending → running → done), evidence counters accumulate, the latest non-empty status string wins. The reducer is pure and lives in the context module so the components consuming it stay dumb.
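The merge rules are easier to read as code. The real reducer lives on the client; this is a Python transliteration to stay in one language with the backend examples, and the delta field names (subtask_id, evidences_emitted, message) are hypothetical.

```python
STATUS_ORDER = {"pending": 0, "running": 1, "done": 2}


def merge_stream_delta(activity, delta):
    """Pure reducer: fold one progress delta into the pipeline activity map."""
    sid = delta["subtask_id"]
    prev = activity.get(sid, {"status": "pending", "evidences": 0, "message": ""})
    incoming = delta.get("status", prev["status"])
    # Statuses only ever move forward, so a late "running" cannot undo "done".
    status = max(prev["status"], incoming, key=STATUS_ORDER.__getitem__)
    merged = {
        "status": status,
        "evidences": prev["evidences"] + delta.get("evidences_emitted", 0),
        # Latest non-empty message wins; empty strings never erase one.
        "message": delta.get("message") or prev["message"],
    }
    return {**activity, sid: merged}  # new dict, input left untouched


activity = {}
for event in [
    {"subtask_id": "s1", "status": "running", "evidences_emitted": 2, "message": "fetching"},
    {"subtask_id": "s1", "status": "done", "evidences_emitted": 1, "message": "3 facts"},
    {"subtask_id": "s1", "status": "running", "message": ""},  # arrives late
]:
    activity = merge_stream_delta(activity, event)
```

The third event arrives out of order and is absorbed without regressing the status or wiping the message.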

The reason this matters: the SSE stream is the public live shape of the pipeline. The reducer is what makes that shape correct on the client. They are two sides of the same protocol, and separating them was worth the complexity.

The artifact store and re-entry

Live SSE is great while you're watching. It's useless thirty seconds later when you've closed the tab.

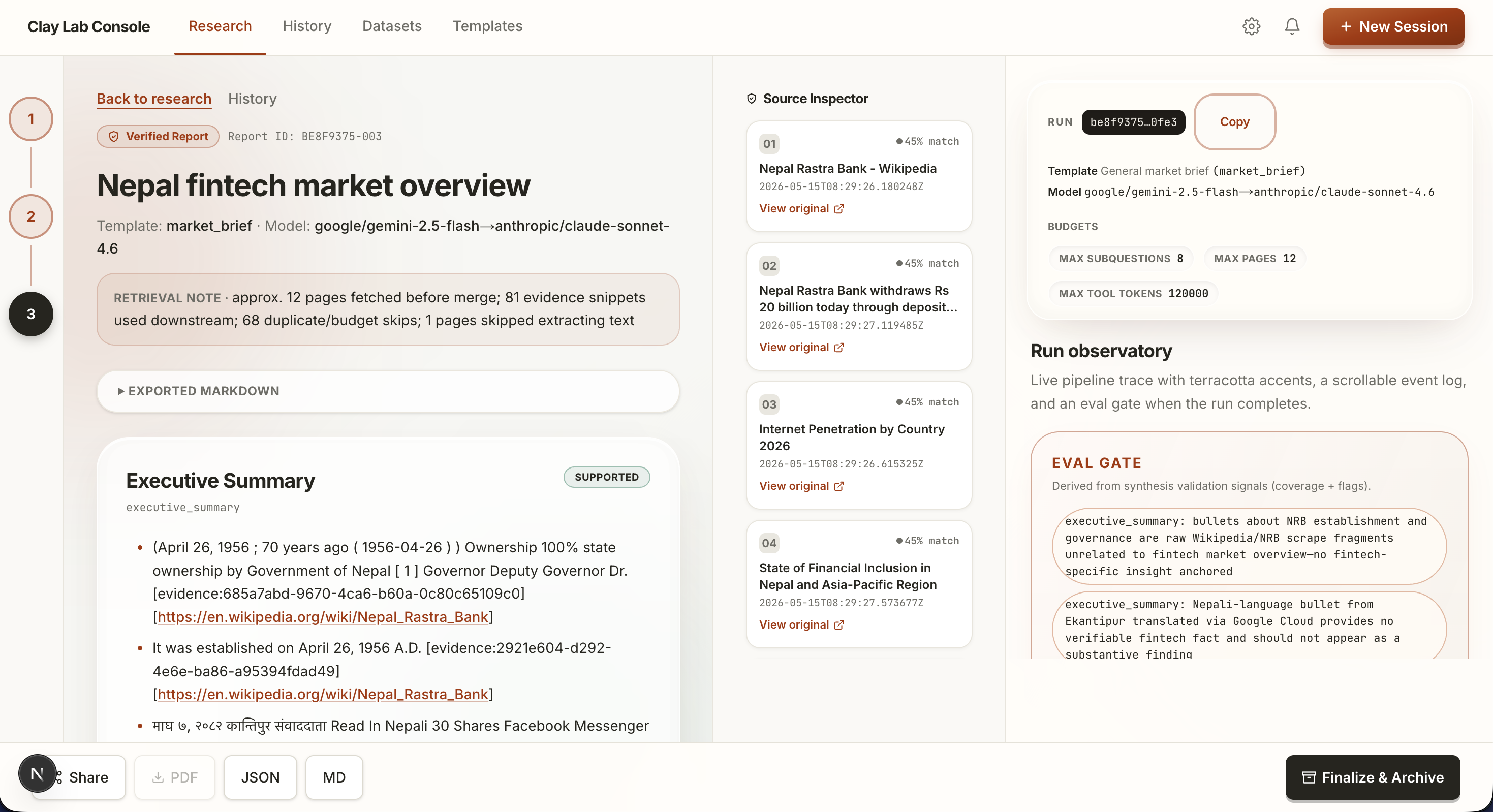

So every run persists. The research_runs table holds the run UUID, template_id, query, metrics, and budgets. The artifact_versions table holds versioned snapshots: every meaningful state change writes a new row with a monotonic version number, the full report JSON, and rendered markdown.
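A minimal sqlite3 sketch of the two tables. The table names and the listed fields come from the paragraph above; the exact column names, types, and the helper functions are my assumptions.

```python
import json
import sqlite3


def init_db(conn):
    conn.executescript("""
        CREATE TABLE IF NOT EXISTS research_runs (
            run_id TEXT PRIMARY KEY,
            template_id TEXT NOT NULL,
            query TEXT NOT NULL,
            metrics TEXT,
            budgets TEXT
        );
        CREATE TABLE IF NOT EXISTS artifact_versions (
            run_id TEXT NOT NULL REFERENCES research_runs(run_id),
            version INTEGER NOT NULL,
            report_json TEXT NOT NULL,
            markdown TEXT NOT NULL,
            PRIMARY KEY (run_id, version)
        );
    """)


def save_artifact(conn, run_id, report, markdown):
    """Write a new snapshot with the next monotonic version number."""
    with conn:  # one transaction: read max version, insert next
        (latest,) = conn.execute(
            "SELECT COALESCE(MAX(version), 0) FROM artifact_versions WHERE run_id = ?",
            (run_id,),
        ).fetchone()
        conn.execute(
            "INSERT INTO artifact_versions VALUES (?, ?, ?, ?)",
            (run_id, latest + 1, json.dumps(report), markdown),
        )
    return latest + 1


def latest_artifact(conn, run_id):
    row = conn.execute(
        "SELECT version, report_json, markdown FROM artifact_versions "
        "WHERE run_id = ? ORDER BY version DESC LIMIT 1",
        (run_id,),
    ).fetchone()
    if row is None:
        return None
    return {"version": row[0], "report": json.loads(row[1]), "markdown": row[2]}


conn = sqlite3.connect(":memory:")
init_db(conn)
conn.execute(
    "INSERT INTO research_runs VALUES (?, ?, ?, ?, ?)",
    ("run-1", "market_brief", "Nepal data center investment", "{}", "{}"),
)
v1 = save_artifact(conn, "run-1", {"sections": {}}, "# Draft")
v2 = save_artifact(conn, "run-1", {"sections": {"key_findings": ["x"]}}, "# Final")
```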

GET /v1/research/{run_id} returns the latest artifact. The Next.js reports/[runId]/page.tsx route checks: do we have a live ResearchRunContext for this ID? If yes, render from that. If no, fetch the artifact and hydrate. Same components either way.

This dual-mode rendering — live or replayed — fell out of the design naturally and I'm pleased with it. The frontend doesn't know or care whether you're watching a run unfold or reading one from yesterday.

The frontend, briefly

I won't dwell on the UI since it's not really the story, but a few notes:

ReportCanvas renders each section as a ClayCard with an uncertainty ribbon, expandable evidence cards, and coverage notes.

SourceInspector shows the canonical source list with snippets and fetch metadata — what the agent actually opened, in order.

RunObservatory wraps PipelineTimeline and SubtaskTopologyFlowCanvas. The topology view is a React Flow graph whose nodes mirror the LangGraph topology: planner → fan-out → branches → merge → fan-out → branches → merge → self-check. Live status colors flow through as the SSE events arrive.

The whole thing is dev-wired via NEXT_PUBLIC_API_URL. There's no fancy auth, no deploy story. That's deliberate; this is a research playground, not a SaaS.

Configuration as policy

A lot of the system's behavior is gated behind env vars. This is intentional. Each flag corresponds to a design choice I wanted to be able to toggle without code edits:

| Flag | What it gates |
| --- | --- |
| STUB_LLM | Disable all LLM calls; planner falls back to _stub_plan. |
| RERANK_USE_EMBEDDINGS | Second-pass embedding rerank on hits. |
| RETRIEVAL_GEO_PENALTY_WEIGHT | Severity of the Nepal-vs-Malaysia penalty. |
| SYNTHESIS_EMBEDDING_ASSIGN | Embedding-based section assignment vs keyword. |
| SYNTH_USE_LLM | LLM refinement pass over synthesized bullets. |
| SELF_CHECK_USE_LLM | LLM critique of coverage flags. |
| FETCH_CACHE_ENABLED | The SQLite fetch cache. |
| LANGSMITH_TRACING | LangSmith trace upload. |
| max_subquestions, max_pages_fetched, max_queries_per_subtask | Budget caps. |

Treating these as policy rather than configuration was useful. When something goes wrong, the first debugging move is "flip the flag and rerun." When something works, the question becomes "do I want this on by default?" — and the answer is documented in the flag's default value.

What's still rough

A few honest pieces of unfinished business:

Planner quality. The plan is still a single LLM call with a stiff prompt. _ensure_core_sections_baked saves us from the obvious failure but doesn't make the plan good. A reflection step ("look at the template, look at your sub-tasks, do they actually cover it?") is the obvious next move.

Fact extraction is naive. naive_extract_facts is genuinely naive: it lifts sentences that look fact-shaped via simple heuristics. A small extraction model would lift quality a lot.

No cross-section reasoning. Each section is synthesized in isolation. executive_summary and key_findings should arguably be downstream of the other sections, summarizing them. Right now they get parallel routing like everything else.

Recency is heuristic. We use DDG's reported datetime when present. When absent, we have nothing. A real system would parse publication metadata from fetched pages.

The geo lexicon is hand-curated. It works for the queries I test with. It does not cover the world. A real solution would use a gazetteer or a named-entity model.

Self-check is shallow. heuristic_self_check is mostly a coverage audit. optional_llm_critique_flags is a thin LLM second opinion. Neither catches subtle hallucinations the way a real adversarial reviewer would.

The thing I keep coming back to

The most interesting design decisions in this project were not architectural. They were about distrust: distrust the planner enough to bake in core sections, distrust the ranker enough to add a geo penalty, distrust the synthesizer enough to block silent filler, distrust the cache enough to version the extract schema.

LLM systems work when you assume every component will eventually lie to you in a small, plausible way, and then you build a guard that turns the lie into a flag. The guards individually are cheap. Together they're the difference between a demo that looks great on the happy path and a system you can hand to someone and let them ask whatever they want.

If you want to look at the code, the repository has backend/app/graph/pipeline.py as a good entry point, then the services under backend/app/services/. The shape will be familiar within an hour. The interesting commits are the ones that fix a failure mode I didn't see coming. Those are the ones worth reading first.